A governed Snowflake warehouse that standardizes raw operational data into bronze, silver, and gold layers for planning, operations, BI, and AI workloads.

Architecture

01

Raw tables preserve source payloads and load metadata so failed downstream transformations can be replayed without asking each source owner for another extract.
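The idea of keeping the payload untouched next to its load metadata can be sketched in a few lines. This is only an illustration under assumptions: the column names (`raw_payload`, `source_system`, `batch_id`, `loaded_at`, `payload_sha256`) and the helper functions are hypothetical and are not taken from the actual warehouse.

```python
import hashlib
import json
from datetime import datetime, timezone


def to_bronze_row(payload: str, source: str, batch_id: str) -> dict:
    """Wrap an untouched source payload with load metadata (hypothetical columns)."""
    return {
        "raw_payload": payload,  # stored verbatim; no parsing or cleaning at this layer
        "source_system": source,
        "batch_id": batch_id,
        "loaded_at": datetime.now(timezone.utc).isoformat(),
        "payload_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
    }


def replay(bronze_rows: list, batch_id: str, transform) -> list:
    """Re-run a downstream transform over one stored batch, without a new extract."""
    return [
        transform(json.loads(row["raw_payload"]))
        for row in bronze_rows
        if row["batch_id"] == batch_id
    ]


# Example: a transform that failed earlier can be re-run from the stored payload.
rows = [to_bronze_row('{"shipment_id": "S1", "tonnage": "1200.5"}', "shipping_api", "batch-001")]
replayed = replay(rows, "batch-001", lambda record: float(record["tonnage"]))
```

Because the payload is stored as received, a corrected transform only needs the batch identifier to reprocess the same input.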

02

Type casting, deduplication, entity resolution, and source-specific validation happen before data is exposed to application or dashboard layers.
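A minimal sketch of what this layer might do for one entity, assuming hypothetical shipment fields (`shipment_id`, `tonnage`, `shipped_on`, `loaded_at`). It covers type casting, a simple validation rule, and keep-the-latest deduplication; entity resolution is left out because it depends on source-specific matching rules that are not described here.

```python
from datetime import date


def to_silver(raw_rows: list) -> tuple:
    """Cast, validate, and deduplicate raw rows; return (clean, rejected)."""
    latest = {}    # shipment_id -> most recently loaded clean row
    rejected = []  # rows that failed casting or validation, kept for inspection
    for row in raw_rows:
        try:
            clean = {
                "shipment_id": str(row["shipment_id"]).strip(),
                "tonnage": float(row["tonnage"]),
                "shipped_on": date.fromisoformat(row["shipped_on"]),
                "loaded_at": row["loaded_at"],
            }
        except (KeyError, TypeError, ValueError):
            rejected.append(row)
            continue
        # Assumed validation rule: an identifier must exist and tonnage cannot be negative.
        if not clean["shipment_id"] or clean["tonnage"] < 0:
            rejected.append(row)
            continue
        previous = latest.get(clean["shipment_id"])
        # ISO-8601 timestamps in one format compare correctly as strings.
        if previous is None or clean["loaded_at"] > previous["loaded_at"]:
            latest[clean["shipment_id"]] = clean
    return list(latest.values()), rejected


raw = [
    {"shipment_id": "S1", "tonnage": "1200.5", "shipped_on": "2024-01-05", "loaded_at": "2024-01-06T00:00:00"},
    {"shipment_id": "S1", "tonnage": "1250.0", "shipped_on": "2024-01-05", "loaded_at": "2024-01-07T00:00:00"},
    {"shipment_id": "S2", "tonnage": "n/a", "shipped_on": "2024-01-05", "loaded_at": "2024-01-06T00:00:00"},
]
clean_rows, rejected_rows = to_silver(raw)
```

Rejected rows are returned rather than dropped, so a validation failure stays visible instead of silently shrinking the table.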

03

Business tables expose stable dimensions and facts for shipment monitoring, contract realization, coal stock, supplier performance, and planning inputs.
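As a rough illustration of how a fact table and a dimension combine into a business metric, the sketch below joins hypothetical shipment facts to a supplier dimension. The table shapes, the sample values, and the definition of contract realization as delivered tonnage divided by contracted tonnage are all assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical dimension: surrogate key -> descriptive attributes.
dim_supplier = {
    1: {"supplier_name": "Supplier A"},
    2: {"supplier_name": "Supplier B"},
}

# Hypothetical fact rows: one row per shipment, referencing the dimension key.
fact_shipment = [
    {"supplier_key": 1, "contracted_tons": 1000.0, "delivered_tons": 900.0},
    {"supplier_key": 1, "contracted_tons": 500.0, "delivered_tons": 500.0},
    {"supplier_key": 2, "contracted_tons": 800.0, "delivered_tons": 400.0},
]


def realization_by_supplier(facts: list, suppliers: dict) -> dict:
    """Return delivered / contracted tonnage per supplier name (assumed definition)."""
    totals = defaultdict(lambda: [0.0, 0.0])  # key -> [contracted, delivered]
    for fact in facts:
        totals[fact["supplier_key"]][0] += fact["contracted_tons"]
        totals[fact["supplier_key"]][1] += fact["delivered_tons"]
    return {
        suppliers[key]["supplier_name"]: delivered / contracted
        for key, (contracted, delivered) in totals.items()
        if contracted > 0  # skip suppliers with no contracted volume
    }


realization = realization_by_supplier(fact_shipment, dim_supplier)
```

Keeping descriptive attributes in the dimension means a supplier rename touches one row, while the fact rows and their keys stay unchanged.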

Work showcase

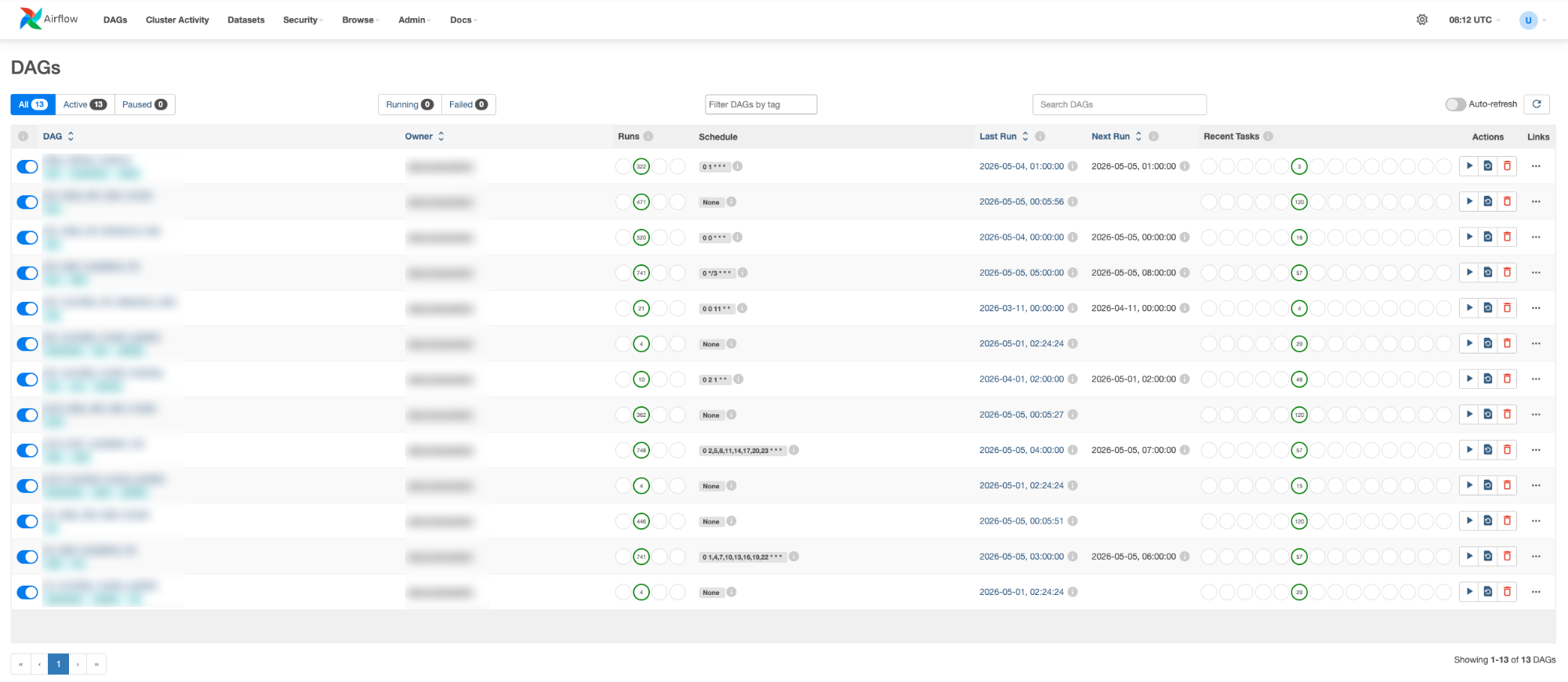

Unified observability across data freshness, data quality checks, and the data catalog: one view of overall warehouse health.

Related PLN EPI work

Decision layer

A machine learning and optimization platform for forecasting demand, modeling coal supply constraints, and recommending allocation plans across PLN EPI operations.

Workflow layer

An operational platform that connects coal contracts, shipments, plant stock, billing, and monitoring workflows into one data-backed system.

Access layer

A natural-language analytics agent connected to governed operational data, allowing users to ask business questions while keeping answers grounded in approved warehouse models.
